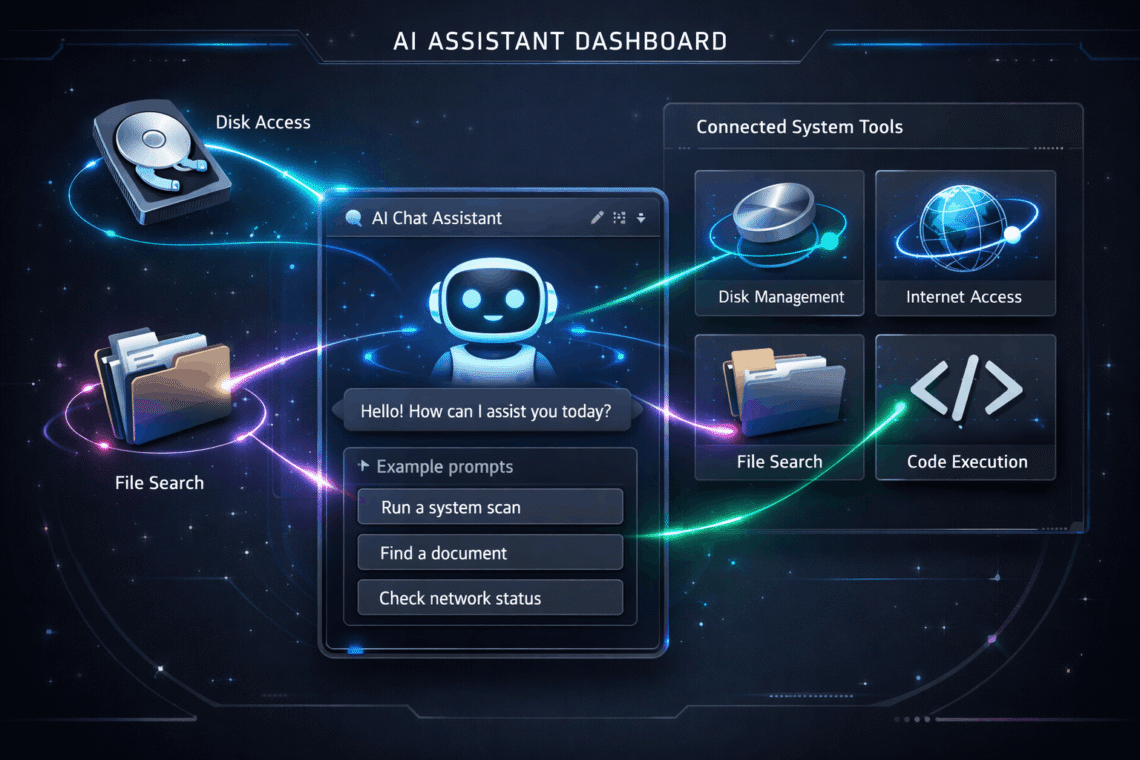

Gemini Function Calling Explained with Python (Step-by-Step Guide)

This post is part of the GenAI Series. Gemini Function Calling takes AI from just answering questions to actually getting things done. In this tutorial, I will use the Gemini API with Python to build a simple but surprisingly capable AI assistant. It can check your system status, test internet connectivity, and hunt down files on your machine. This real-world example will help you understand how AI function calls work under the hood. You will also learn how to wire up external tools with Google Gemini and how modern LLMs execute actions instead of just generating text. Introduction What is Gemini Function Calling Function Calling is a way for Gemini…

-

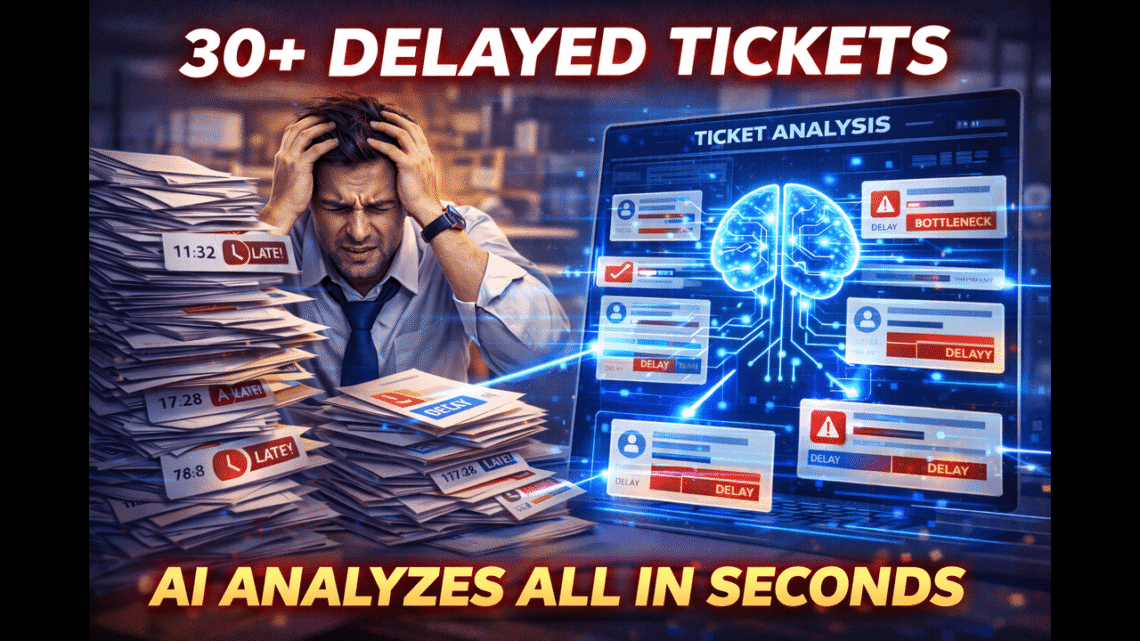

I Built an AI Tool to Analyze Delayed Support Tickets

This post is part of the GenAI Series. A few moons ago, I built a small AI tool to deal with ticket delays at my company. It wasn’t a big, fancy product. It started as a simple experiment, but it worked really well. We were getting negative feedback from clients about tickets not being completed on time. The real challenge was figuring out the actual reason behind each delay. Was it something our team did wrong, or was the delay coming from the client’s end? When you have a ticket with huge comment threads and internal notes, it becomes difficult to go through everything to find the real reason. It takes…

-

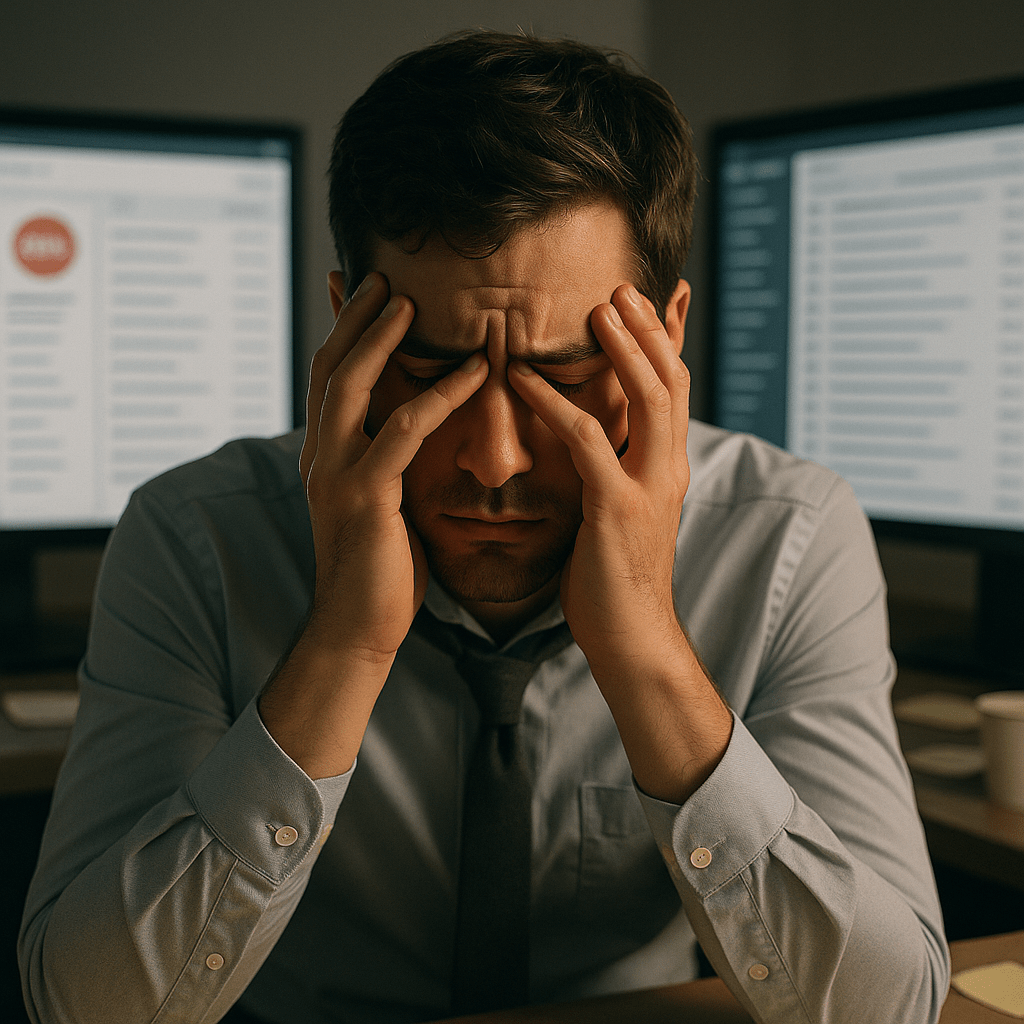

Reimagining Customer Support with GenAI

This post is part of the GenAI Series. If you’ve ever worked in customer support, you know the feeling. It’s 2 PM on a Wednesday. Your inbox is overflowing. A customer just sent their third “following up” email. Your manager wants to know why last week’s tickets are still open. And somewhere in the back of your mind, you’re wondering: Why does this feel so hard? Here’s the thing: Most support teams aren’t failing because people aren’t trying hard enough. They’re struggling because the systems we’ve built over years of “making do” were never designed to handle today’s volume, complexity, or customer expectations. And the stakes? They’ve never been higher. According…

-

How to Implement Guardrails in LLMs (With Practical Examples)

This post is part of the GenAI Series. In March, I received an unsolicited email from the founder of a website who had created an AI wrapper related to the medical field. He had also subscribed me to his newsletter. I responded to him immediately and asked where he had gotten my email address from, but I received no reply. Then I started exploring his website. The first thing I tried was asking it to write some Python code, and it did! I then sent him the following email: As you can see, I responded with the screenshot attached: Guys! The first rule for writing a GPT wrapper…

-

Building Your First Multi-Signal Trading Strategy with RSI and Moving Averages

This post is part of the T4p Series. In this post, we are going to combine two indicators, RSI and Moving Averages, to come up with a strategy. But first, let’s talk about what we mean by a “strategy.” A trading strategy is simply a set of rules that tells you when to buy and when to sell. It’s different from just using individual indicators because it combines multiple signals into a complete system. Whether you use a single indicator or a set of indicators, the end goal is the same: to generate buy/sell signals or open long/short positions. When you rely on a single indicator, for instance, RSI alone,…

-

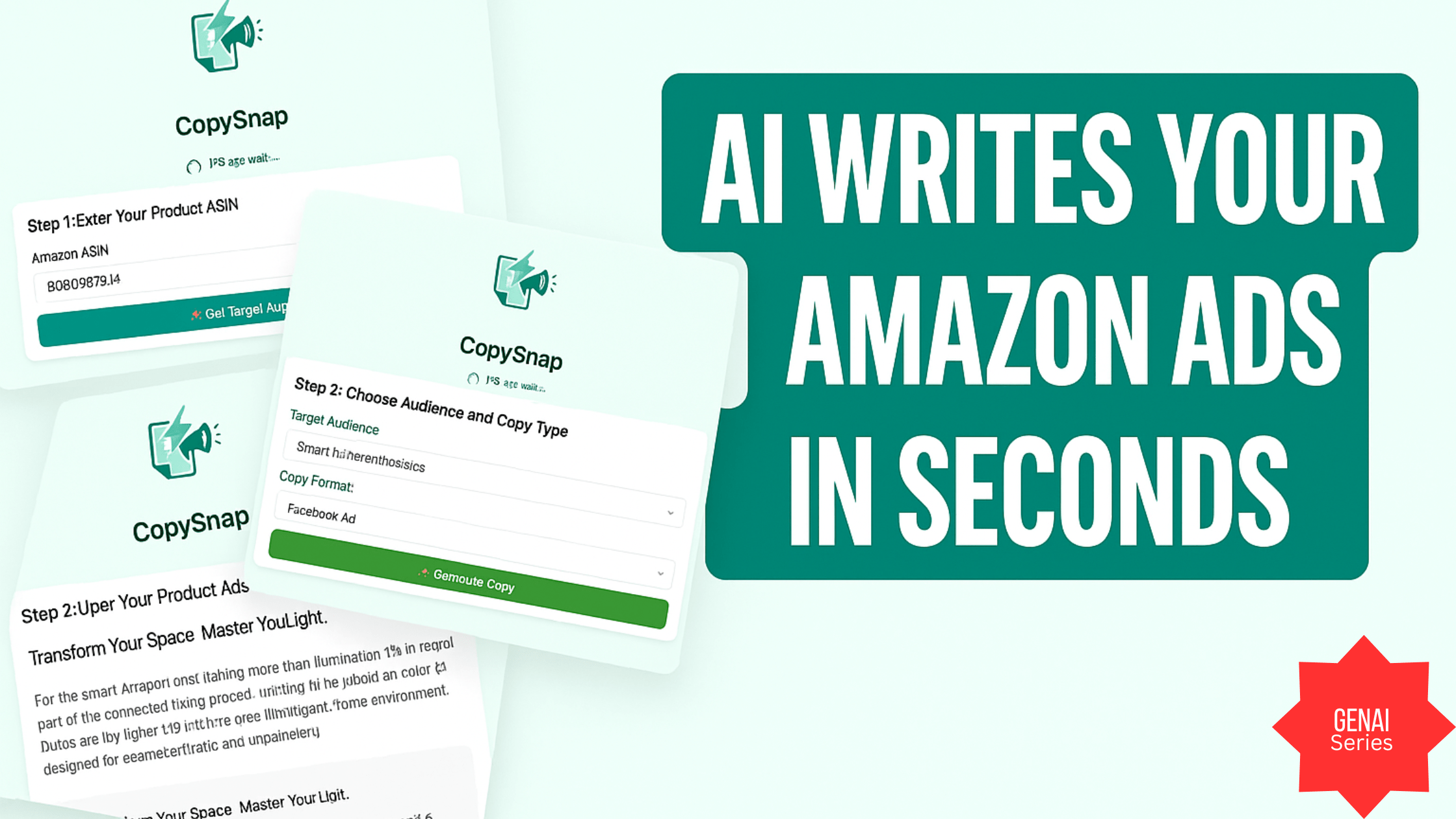

Building an AI-Powered Amazon Ad Copy Generator with Flask and Gemini

This post is part of the GenAI Series. Back-to-back GenAI-related posts, but I could not resist writing this post instead of a trading-related one. A few days ago, I built a small tool that lets you input an Amazon ASIN and instantly generate marketing copy, including Facebook ads, Amazon A+ content, and SEO product descriptions by using Gemini. The frontend is built with Bootstrap + jQuery, and the backend runs on Python Flask. While this was originally aimed at Amazon sellers, it turned out to be a fun exercise in prompt engineering, tool chaining, and turning structured product data into persuasive, persona-targeted content. If you are in a hurry, then watch…

-

Writing Modular Prompts

This post is part of the GenAI Series. These days, if you ask a tech-savvy person whether they know how to use ChatGPT, they might take it as an insult. After all, using GPT seems as simple as asking anything and getting a magical answer instantly. But here’s the thing. There’s a big difference between using ChatGPT and using it well. Most people stick to casual queries; they ask something and ChatGPT answers. They end up either happy or sad with the answer. If the latter, they will ask again and probably end up even sadder, and there might be a time when they start thinking of committing suicide. On the other hand, if you…

-

Generating Buy/Sell Signals with Moving Averages Using pandas-ta

This post is part of the T4p Series. In the previous post, I discussed the engulfing pattern. In this post, we will discuss Moving Averages. What Is Moving Average Moving Averages are one of the most widely used indicators. They smooth out price fluctuations by calculating the average price over a specific number of periods, creating a trend-following line that updates as new data becomes available, which helps traders identify the overall direction of the price movement. Types Of Moving Averages Simple Moving Average (SMA) – Calculates the arithmetic mean of prices over a set period, giving equal weight to all data points Exponential Moving Average (EMA) – Gives more…

-

Using ScraperAPI to bypass Cloudflare in Python

Introduction Cloudflare’s CAPTCHA challenges are among the biggest hurdles Python developers face when writing a web scraper. Cloudflare offers various protections, such as bot detection, CAPTCHA challenges (including the newer Turnstile verification), and IP blocking, to prevent automated website access for data retrieval. These protections often result in “Verify You Are Human” checks, 403 Forbidden errors, or the challenging 1020 Access Denied responses. In this post of the scraper series, we will learn about Cloudflare and its services that hinder web scraping, and how you can use ScraperAPI’s APIs to bypass Cloudflare’s CAPTCHA/protection techniques. Understanding Cloudflare Protection What is Cloudflare? A global network service provider that offers website security,…

-

OpenAI Function Calling in Python: Build a Real Crypto RSI Bot (Step-by-Step Example)

This post is part of the GenAI Series. In the previous post of the GenAI series, I built an adaptive dashboard using Claude APIs. In this post, I’ll introduce the concept of function calling and show how we can leverage it to build a crypto assistant that generates RSI-based signals by analyzing OHLC data from Binance. What is OpenAI Function Calling Function calling in OpenAI’s API lets the model identify when to call specific functions based on user input by generating a structured JSON object with the necessary parameters. This creates a bridge between conversational AI and external tools or services, allowing your crypto bot to perform operations such as checking…