It is not uncommon for developers to interact with remote servers. Besides FTP clients, many use a terminal or console to carry out different tasks. SSH is usually used to connect to remote servers and execute commands; from running git to starting a web or database server, almost everything can be done with an SSH client.

Recently I came across an awesome tool called Fabric. It’s a Python-based tool that automates your command-line activities.

What is Fabric?

From the official website:

Fabric is a Python (2.5-2.7) library and command-line tool for streamlining the use of SSH for application deployment or systems administration tasks.

It provides a basic suite of operations for executing local or remote shell commands (normally or via sudo) and uploading/downloading files, as well as auxiliary functionality such as prompting the running user for input, or aborting execution.

Fabric ships with a command-line tool, fab, which looks for a file named fabfile.py (or a module simply named fabfile) containing ordinary Python code. Below is the simplest fabfile.

    from fabric.api import run

    def host_type():
        run('uname -s')

To run this code, use the following command in a terminal:

fab host_type

This connects to the remote machine or machines already defined in the file and prints each one's kernel name as reported by uname -s (for example, Linux). If no hosts are defined, you can pass the machine names as a parameter:

fab -H localhost,linuxbox host_type

It will run the host_type function on two hosts: localhost and the host named linuxbox.

Alright, for this post I am going to create a fabfile that goes into the repo folder, adds files, commits, and pushes the code. Once that’s done, it will connect to the remote server(s), pull the code, and execute a script file.

Automating local Git

So, in order to perform all these tasks I am going to create a fabfile. The very first line in it would be... yeah, importing the required libraries.

from fabric.api import *

This imports Fabric's API functions into your script.

Now, as I mentioned, we need to deal with a local repo, so I am going to create a separate function for it:

    def local_git():
        print('HI Local Git')

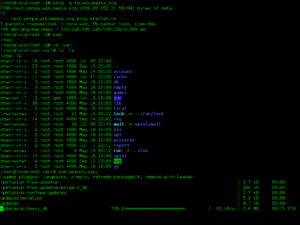

Before running it, note that Fabric can also list every task defined in the fabfile: run fab --list (or fab -l) to see them. Next, replace the placeholder body of local_git() with something that actually inspects the repository:

    with lcd('/Development/PetProjects/OhBugz/src/ohbugztracker/'):
        c = local('git status', capture=True)
        # Check whether the branch is already up to date before doing anything else
        if 'Your branch is up-to-date with' in c.stdout:
            print('Already Updated')

For this purpose I am going to use the repo of one of my open source projects, OhBugz (the series about it is covered here).

Here I am using the context manager lcd (local change directory). It navigates to a folder, and the git status command then runs within that context. By passing capture=True, I store the command's output in a variable for further processing.
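If you are curious what lcd combined with local(..., capture=True) roughly amounts to, here is a minimal standard-library sketch. It is not how Fabric implements these calls; run_in_dir is a hypothetical helper I made up for illustration, and it takes an argument list rather than a shell string.

```python
import os
import subprocess
import sys
import tempfile


def run_in_dir(directory, argv):
    """Run argv inside directory and return its captured stdout as text.

    Hypothetical helper: it mimics the *effect* of lcd() plus
    local(..., capture=True), not Fabric's actual implementation.
    """
    completed = subprocess.run(
        argv,
        cwd=directory,             # like lcd(): the command starts in this folder
        stdout=subprocess.PIPE,    # like capture=True: keep the output instead of printing it
        stderr=subprocess.PIPE,
        universal_newlines=True,   # decode bytes to str
        check=True,                # raise CalledProcessError on a non-zero exit code
    )
    return completed.stdout.strip()


if __name__ == "__main__":
    # Ask a child Python process where it is running, so the example needs no git repo.
    with tempfile.TemporaryDirectory() as folder:
        where = run_in_dir(folder, [sys.executable, "-c", "import os; print(os.getcwd())"])
        print(where)
```

The point of the sketch is only that the working directory applies to the command inside the block and that the output comes back as a value you can inspect.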

Now, this is a function I don’t want to be called directly via the fab tool. Instead, I am going to make a wrapper function named deploy, which will call local_git() and then remote_git(). So after the final changes, local_git() now looks like:

    def local_git():
        is_done = True
        try:
            with lcd('/Development/PetProjects/OhBugz/src/ohbugztracker/'):
                r = local('git status', capture=True)
                # Only add, commit and push when the branch is not already up to date
                if 'Your branch is up-to-date with' not in r.stdout:
                    local('git add .', capture=True)
                    # Quote the message so the shell passes it to git as one argument
                    local('git commit -m "Initial Commit"')
                    local('git push origin master')
        except Exception as e:
            print(str(e))
            is_done = False
        return is_done

If you know git commands, none of this should look unfamiliar. local means that all these commands run on your own machine. If all goes well, the function returns True.
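Two details in that function are easy to get wrong: a commit message with spaces has to reach git as a single shell argument, and the wording of the git status line can differ between git versions. Here is a small sketch of two helpers for this. Both commit_command and is_up_to_date are hypothetical names of mine, and the assumption that git prints either "up-to-date" or "up to date" should be checked against your own git version.

```python
try:
    from shlex import quote   # Python 3
except ImportError:
    from pipes import quote   # Python 2


def commit_command(message):
    """Build a `git commit` command line with the message safely shell-quoted."""
    return "git commit -m " + quote(message)


def is_up_to_date(status_output):
    """Return True if `git status` output reports the branch as up to date.

    Assumption: depending on the git version the wording is either
    "up-to-date" or "up to date", so both spellings are accepted.
    """
    normalized = status_output.replace("up-to-date", "up to date")
    return "Your branch is up to date with" in normalized


if __name__ == "__main__":
    print(commit_command("Initial Commit"))
    print(is_up_to_date("On branch master\nYour branch is up to date with 'origin/master'."))
```

Inside a fabfile these would be used as local(commit_command('Initial Commit')) and is_up_to_date(r.stdout).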

Now I am adding the main function that will be called by fab:

    def deploy():
        result = local_git()
        if result:
            remote_git()  # running remote git commands

Cleaner, No?
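Earlier I said the helper functions should not be callable directly from fab. As far as I understand classic Fabric 1.x task discovery, every public callable in the fabfile counts as a task and names starting with an underscore are skipped, so renaming the helpers to _local_git and _remote_git is one way to hide them. The sketch below imitates that naming rule with plain Python; public_tasks is a hypothetical function, and the real rule also skips Fabric's own functions such as run and local.

```python
import types


def public_tasks(module):
    """Return sorted names of callables in module that do not start with '_'.

    A simplified stand-in for classic Fabric 1.x task discovery, written
    only to illustrate the underscore naming convention.
    """
    return sorted(
        name
        for name, value in vars(module).items()
        if callable(value) and not name.startswith("_")
    )


if __name__ == "__main__":
    # Build a throwaway module that plays the role of fabfile.py.
    fabfile = types.ModuleType("fabfile")
    exec(
        "def _local_git():\n    return True\n"
        "def _remote_git():\n    return True\n"
        "def deploy():\n    return _local_git() and _remote_git()\n",
        fabfile.__dict__,
    )
    print(public_tasks(fabfile))
```

With the helpers prefixed this way, only deploy shows up as something you would call from the command line.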

Automating SSH and remote Git

Now all I want is to connect to a remote machine and pull the code. Before I do that, I need to set the remote host, and there are three options for that. One, already shown, is passing the host names with the -H flag; another is setting env.hosts:

env.hosts = ['123.456.789.123']

So if I now run fab deploy, it will connect to this host for remote commands. Personally, I am going to use the settings context manager, as it gives me more control over the servers I want to use. Unlike the local machine, where lcd is used to change directory, on the remote side you use cd.

    def remote_git():
        is_done = True
        try:
            # Assumption: `hosts` is a list of server addresses defined elsewhere in the fabfile
            for host in hosts:
                with settings(host_string=host.strip()):
                    with cd('public_html/code'):
                        run('git stash')
                        run('git pull origin master')
        except Exception as e:
            print(str(e))
            is_done = False
        return is_done

settings accepts the name of the host to connect to; once connected, the git commands run on that remote machine.
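To make the idea of a temporary override more concrete, here is a minimal sketch of a settings-like context manager over a plain dictionary. It is not Fabric's implementation: the env dict, its user key and the web1.example.com host name are all stand-ins I made up so that the example runs without Fabric installed.

```python
from contextlib import contextmanager

# Stand-in for Fabric's global `env`; a plain dict keeps the sketch self-contained.
env = {"host_string": None, "user": "deploy"}


@contextmanager
def settings(**overrides):
    """Temporarily override keys in env, restoring the old values on exit."""
    missing = object()  # sentinel for keys that did not exist before
    previous = {key: env.get(key, missing) for key in overrides}
    env.update(overrides)
    try:
        yield env
    finally:
        for key, old in previous.items():
            if old is missing:
                env.pop(key, None)
            else:
                env[key] = old


if __name__ == "__main__":
    with settings(host_string="web1.example.com"):
        print(env["host_string"])   # inside the block: the override
    print(env["host_string"])       # after the block: the original value
```

The override only lives for the duration of the with block, which is why it gives finer control than a global env.hosts assignment.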

If you are using an SSH config file, add the line env.use_ssh_config = True before connecting to remote servers, and Fabric will read the connection details from that file.

Whether you have one server or many does not matter: a single script will connect to each server and run the commands accordingly. For multiple servers, just iterate over a list of server IPs and perform the tasks on each.
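Iterating over servers can be sketched without Fabric at all. In the example below, clean_hosts and run_on_each are hypothetical helpers, the host names are placeholders, and skipping lines that start with # is my own convention rather than anything Fabric requires. The task argument stands in for whatever you would do per host, for example a settings(host_string=host) block.

```python
def clean_hosts(lines):
    """Strip whitespace and drop blank lines or '#' comments from host entries."""
    hosts = []
    for line in lines:
        host = line.strip()
        if host and not host.startswith("#"):
            hosts.append(host)
    return hosts


def run_on_each(hosts, task):
    """Call task(host) for every host and record whether it succeeded."""
    results = {}
    for host in hosts:
        try:
            task(host)
            results[host] = True
        except Exception as error:
            print("%s failed: %s" % (host, error))
            results[host] = False
    return results


if __name__ == "__main__":
    raw = ["web1.example.com\n", "\n", "# staging\n", "web2.example.com\n"]

    def fake_pull(host):
        # Placeholder for the real per-host work.
        if host.startswith("web2"):
            raise RuntimeError("connection refused")

    print(run_on_each(clean_hosts(raw), fake_pull))
```

Recording a result per host, instead of a single flag, makes it obvious which server failed when you deploy to several at once.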

Conclusion

Fabric is a simple and powerful tool that helps automate repetitive CLI-based administrative tasks. By writing Python-based fabfiles you can save time by automating your entire workflow.

The complete code can be read here.