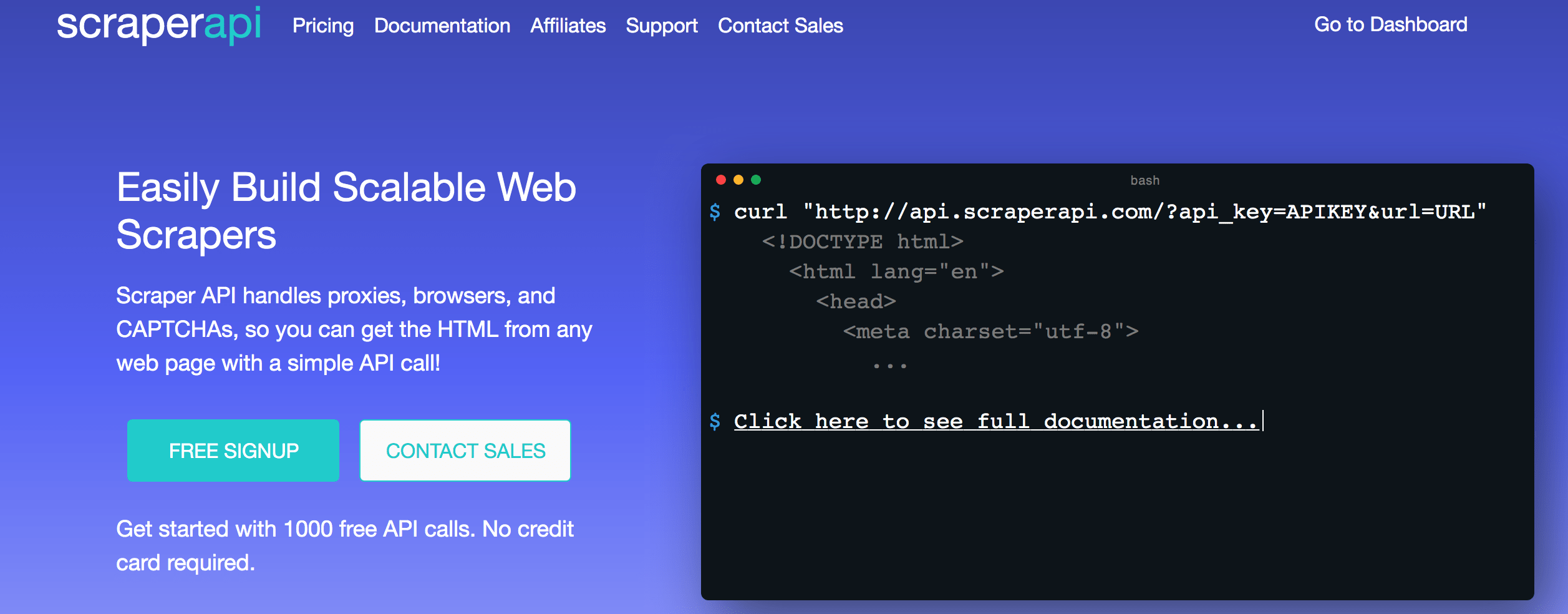

Recently I come across a tool that takes care of many of the issues you usually face while scraping websites. The tool is called Scraper API which provides an easy to use REST API to scrape a different kind of websites(Simple, JS enabled, Captcha, etc) with quite an ease. Before I proceed further, allow me to introduce Scraper API.

What is Scraper API

If you visit their website you’d find their mission statement:

Scraper API handles proxies, browsers, and CAPTCHAs, so you can get the HTML from any web page with a simple API call!

As it suggests, it is offering you all the things to deal with the issues you usually come across while writing your scrapers.

Development

Scraper API provides a REST API that can be consumed in any language. Since this post is related to Python so I’d be mainly focusing on requests library to use this tool.

You must first signup with them and in return, they will provide you an API KEY to use their platform. They provide 1000 free API calls which are enough to test their platform. Otherwise, they offer different plans from starter to the enterprise which you can view here.

Let’s try a simple example which is also giving in the documentation.

import requests

if __name__ == '__main__':

API_KEY = '<YOUR API KEY>'

URL_TO_SCRAPE = 'https://httpbin.org/ip'

payload = {'api_key': API_KEY, 'url': URL_TO_SCRAPE}

r = requests.get('http://api.scraperapi.com', params=payload, timeout=60)

print(r.text)

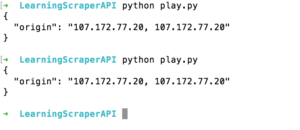

Assuming you are registered and have got an API which you can find on the dashboard, you can start working right away after having it. When you run this program it shows the IP address of your request.

Do you see, every time it returns a new IP address, cool, isn’t it?

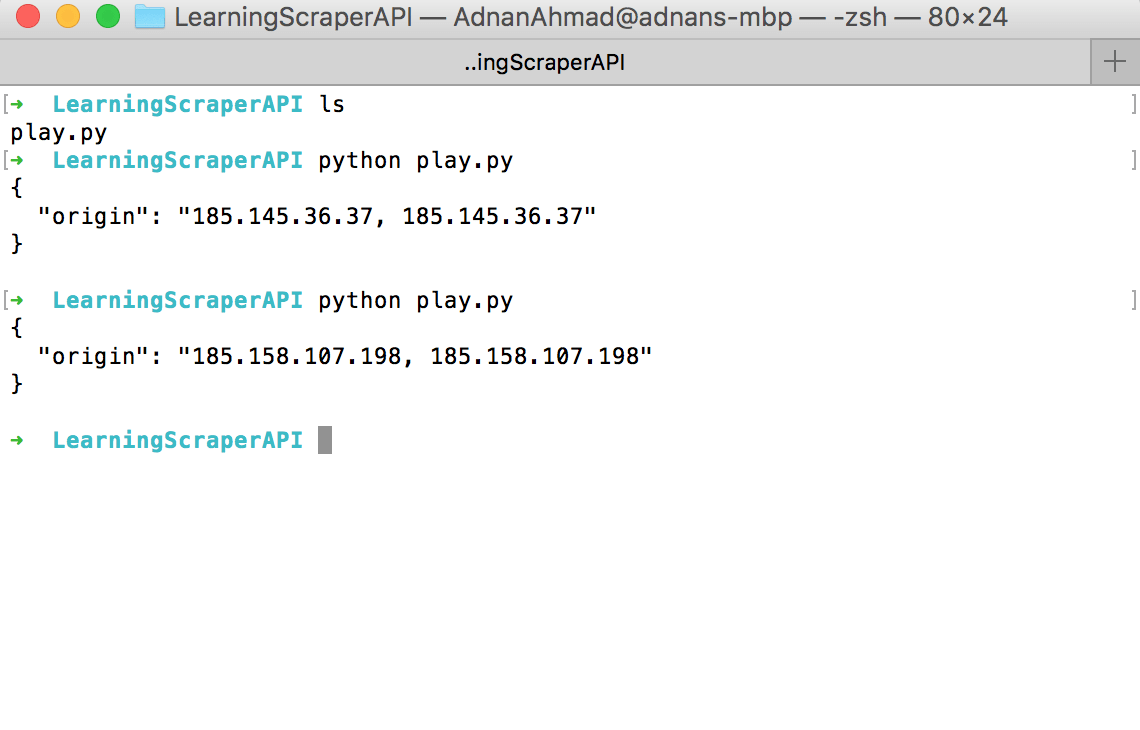

There are some scenarios where you would like to use the same proxy to give the impression that a single user is visiting a different part of the website. For that, you can pass session_number parameter in the payload variable above.

URL_TO_SCRAPE = 'https://httpbin.org/ip'

payload = {'api_key': API_KEY, 'url': URL_TO_SCRAPE,'session_number': '123'}

r = requests.get('http://api.scraperapi.com', params=payload, timeout=60)

print(r.text)

And it’d produce the following result:

Can you notice the same proxy IP here?

Creating OLX Scrapper

Like previous scraping related posts, I am going to pick OLX again for this post. I will iterate the list first and then will scrape individual items. Below is the complete code.

payload = {'api_key': API_KEY, 'url': URL_TO_SCRAPE, 'session_number': '123'}

r = requests.get('http://api.scraperapi.com', params=payload, timeout=60)

if r.status_code == 200:

html = r.text.strip()

soup = BeautifulSoup(html, 'lxml')

links = soup.select('.EIR5N > a')

for l in links:

all_links.append('https://www.olx.com.pk' + l['href'])

idx = 0

if len(all_links) > 0:

for link in all_links:

sleep(5)

payload = {'api_key': API_KEY, 'url': link, 'session_number': '123'}

if idx > 1:

break

r = requests.get('http://api.scraperapi.com', params=payload, timeout=60)

if r.status_code == 200:

html = r.text.strip()

soup = BeautifulSoup(html, 'lxml')

price_section = soup.find('span', {'data-aut-id': 'itemPrice'})

print(price_section.text)

idx += 1

I am using Beautifulsoup to parse HTML. I have only extracted Price here because the purpose is to tell about the API itself than Beautifulsoup. You should see my post here in case you are new into scraping and Python.

Conclusion

In this post, you learned how to use Scraper API for scraping purposes. Whatever you can do with this API you can do it by other means as well; this API provides you everything under the umbrella, especially rendering of pages via Javascript for which you need headless browsers which, at times become cumbersome to set things up on remote machines for headless scraping. Scraper API is taking care of it and charging nominal charges for individuals and enterprises. The company I work with spends 100s of dollars every month just for the proxy IPs.

Oh if you sign up here with my referral link or enter promo code adnan10, you will get a 10% discount on it. In case you do not get the discount then just let me know via email on my site and I’d sure help you out.

In the coming days, I’d be writing more posts about Scraper API discussing further features.